How It Works

Have you ever wondered what artificial intelligence sees when it looks at a photo of a face? AttractivenessTest.com offers a fascinating window into AI-driven facial analysis. Our platform uses deep learning models to produce a score based on the facial features that most influence how people perceive attractiveness.

Our Technology at a Glance

At the heart of our platform is a pipeline of computer vision and deep learning models. The process is designed to be fast, accurate, and secure, delivering results in seconds. Here’s a step-by-step look at how it works.

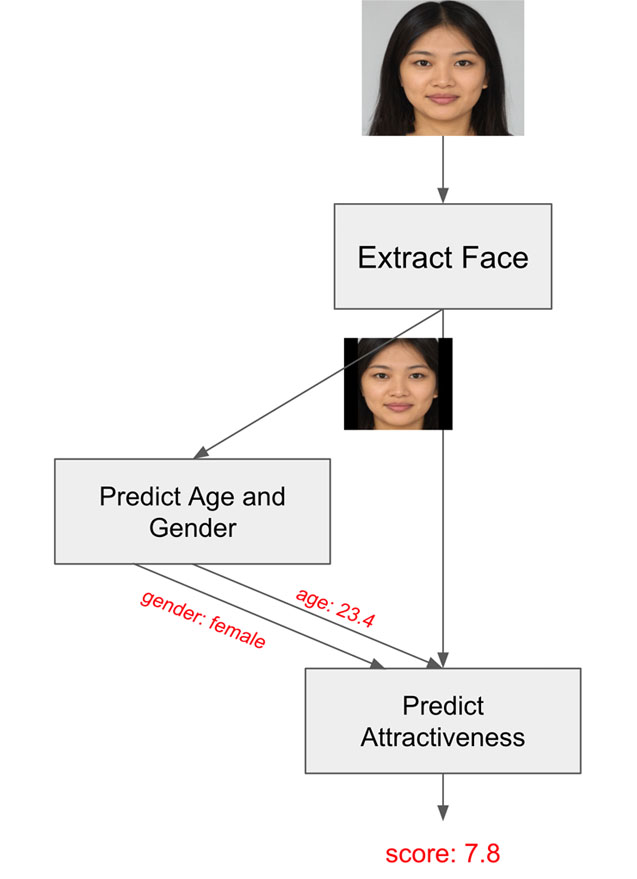

Step 1: Precision Face Detection

The process begins the moment you upload your photo. Our first algorithm analyzes the image to detect and isolate the face. By focusing exclusively on the face and cropping out distracting backgrounds, we keep the analysis on the features that matter. This precise crop is the foundation for a reliable score.
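As a rough illustration of the cropping step, the sketch below assumes a face detector has already returned a bounding box as `(x, y, width, height)` in pixels. The detector itself, the 20% margin, and the helper names `expand_and_clamp` and `crop` are hypothetical, not our production code. The sketch pads the box so the whole face stays in frame, clips it to the image borders, and slices out those pixels.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height) in pixels


def expand_and_clamp(box: Box, img_w: int, img_h: int, margin: float = 0.2) -> Box:
    """Grow a detector box by `margin` (a fraction of its size) on every side,
    then clip it so it never extends past the image borders."""
    x, y, w, h = box
    dx, dy = int(w * margin), int(h * margin)
    x0, y0 = max(0, x - dx), max(0, y - dy)
    x1, y1 = min(img_w, x + w + dx), min(img_h, y + h + dy)
    return x0, y0, x1 - x0, y1 - y0


def crop(image: List[List[int]], box: Box) -> List[List[int]]:
    """Crop a row-major image (a list of pixel rows) to the given box."""
    x, y, w, h = box
    return [row[x:x + w] for row in image[y:y + h]]
```

For a 100-by-80 image and a detected box of `(40, 30, 20, 20)`, `expand_and_clamp` returns `(36, 26, 28, 28)`; a box near an edge is simply trimmed to fit.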

Step 2: Facial Landmark Mapping

Once the face is isolated, our AI identifies 400 keypoints, or facial landmarks. These landmarks trace the contours of your face, including the eyes, nose, mouth, jawline, and eyebrows. From this map we calculate geometric features such as symmetry, proportions, and the spacing between facial elements.
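To make "symmetry" and "proportions" concrete, here is a minimal sketch. It assumes landmarks arrive as named `(x, y)` points and that the vertical midline of the face is known. The landmark names (`left_eye`, `mouth_left`, and so on) and the normalization by inter-eye distance are illustrative assumptions; a real 400-point scheme would use its own indexing. Symmetry is measured by mirroring each left-side point across the midline and checking how far it lands from its right-side partner. A proportion is simply a ratio of two distances.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def symmetry_error(landmarks: Dict[str, Point],
                   pairs: List[Tuple[str, str]],
                   midline_x: float) -> float:
    """Mean distance between each left landmark mirrored across x = midline_x
    and its right counterpart, divided by the inter-eye distance.
    0.0 means perfectly symmetric; larger values mean less symmetric."""
    eye_dist = math.dist(landmarks["left_eye"], landmarks["right_eye"])
    total = 0.0
    for left, right in pairs:
        lx, ly = landmarks[left]
        mirrored = (2 * midline_x - lx, ly)  # reflect across the vertical midline
        total += math.dist(mirrored, landmarks[right])
    return total / len(pairs) / eye_dist


def ratio(landmarks: Dict[str, Point], a: str, b: str, c: str, d: str) -> float:
    """Proportion: distance a-b divided by distance c-d."""
    return math.dist(landmarks[a], landmarks[b]) / math.dist(landmarks[c], landmarks[d])
```

Dividing by the inter-eye distance makes the symmetry measure independent of image resolution, so the same face photographed at different sizes gets the same value.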

Step 3: Understanding Context with Age and Gender Analysis

To provide a more nuanced and meaningful evaluation, our next model estimates the age and gender from the facial data. Our research shows that incorporating this context allows our AI to deliver a more personalized and accurate attractiveness score, tailored to key demographic characteristics.
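One simple way to pass this context to a downstream model is to append it to the geometric features. The sketch below rests on two hypothetical choices: age is scaled to [0, 1] using a `max_age` of 100, and gender is one-hot encoded over a two-label set. How our models actually encode these inputs is not described here.

```python
from typing import List, Tuple

# Hypothetical label set; the real model's encoding is not documented here.
GENDER_LABELS: Tuple[str, ...] = ("female", "male")


def build_input(geometry: List[float], age: float, gender: str,
                max_age: float = 100.0) -> List[float]:
    """Return the geometric features followed by scaled age and a one-hot gender vector."""
    if gender not in GENDER_LABELS:
        raise ValueError(f"unknown gender label: {gender!r}")
    # Scale age into [0, 1], clipping estimates outside the expected range.
    age_scaled = min(max(age / max_age, 0.0), 1.0)
    one_hot = [1.0 if gender == label else 0.0 for label in GENDER_LABELS]
    return list(geometry) + [age_scaled] + one_hot
```

For example, `build_input([0.0125, 1.9], 25, "male")` yields `[0.0125, 1.9, 0.25, 0.0, 1.0]`.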

Step 4: The Attractiveness Score

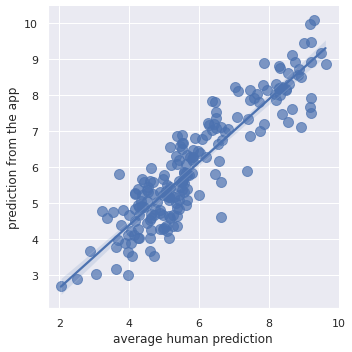

This is where the magic happens. The processed facial image, enriched with keypoint data, age, and gender, is fed into our core deep neural network. This powerful model, built on a state-of-the-art architecture, has been trained on a vast and diverse dataset of images.

Through a process known as supervised learning, the AI learned the subtle patterns and features that correlate with human perceptions of attractiveness. This extensive training enables our system to generalize its knowledge and provide a consistent score for new faces it has never seen before.
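Our core model is a deep neural network, but the idea behind supervised learning can be shown with something much smaller. The sketch below substitutes plain linear regression trained by batch gradient descent on mean squared error. Each training example pairs a feature vector with a target score, and the weights are adjusted repeatedly to shrink the gap between prediction and target. The function names, learning rate, and epoch count are illustrative assumptions.

```python
from typing import List, Tuple

Example = Tuple[List[float], float]  # (feature vector, target score)


def train_linear(data: List[Example], lr: float = 0.1,
                 epochs: int = 500) -> Tuple[List[float], float]:
    """Fit score ~ w . x + b by minimizing mean squared error with batch gradient descent."""
    n_features = len(data[0][0])
    w = [0.0] * n_features
    b = 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) + b - y  # prediction minus target
            for i, xi in enumerate(x):
                grad_w[i] += 2 * err * xi / n
            grad_b += 2 * err / n
        # Step every parameter against its gradient.
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict(w: List[float], b: float, x: List[float]) -> float:
    """Score a new, unseen feature vector with the learned parameters."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b
```

On a toy set generated from `score = 2x + 1` (made-up numbers, not real data), `train_linear([([0.0], 1.0), ([0.5], 2.0), ([1.0], 3.0)])` recovers a weight close to 2 and a bias close to 1. As a result, `predict` gives about 1.5 for the unseen input `[0.25]`, which is generalization in miniature.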

Attractiveness AI

We recently launched our new website, attractivenessai.com, which extends the attractiveness test with more detailed results and personalized recommendations. It uses the same core technology but offers a host of new features. Check it out to dive deeper into your analysis!

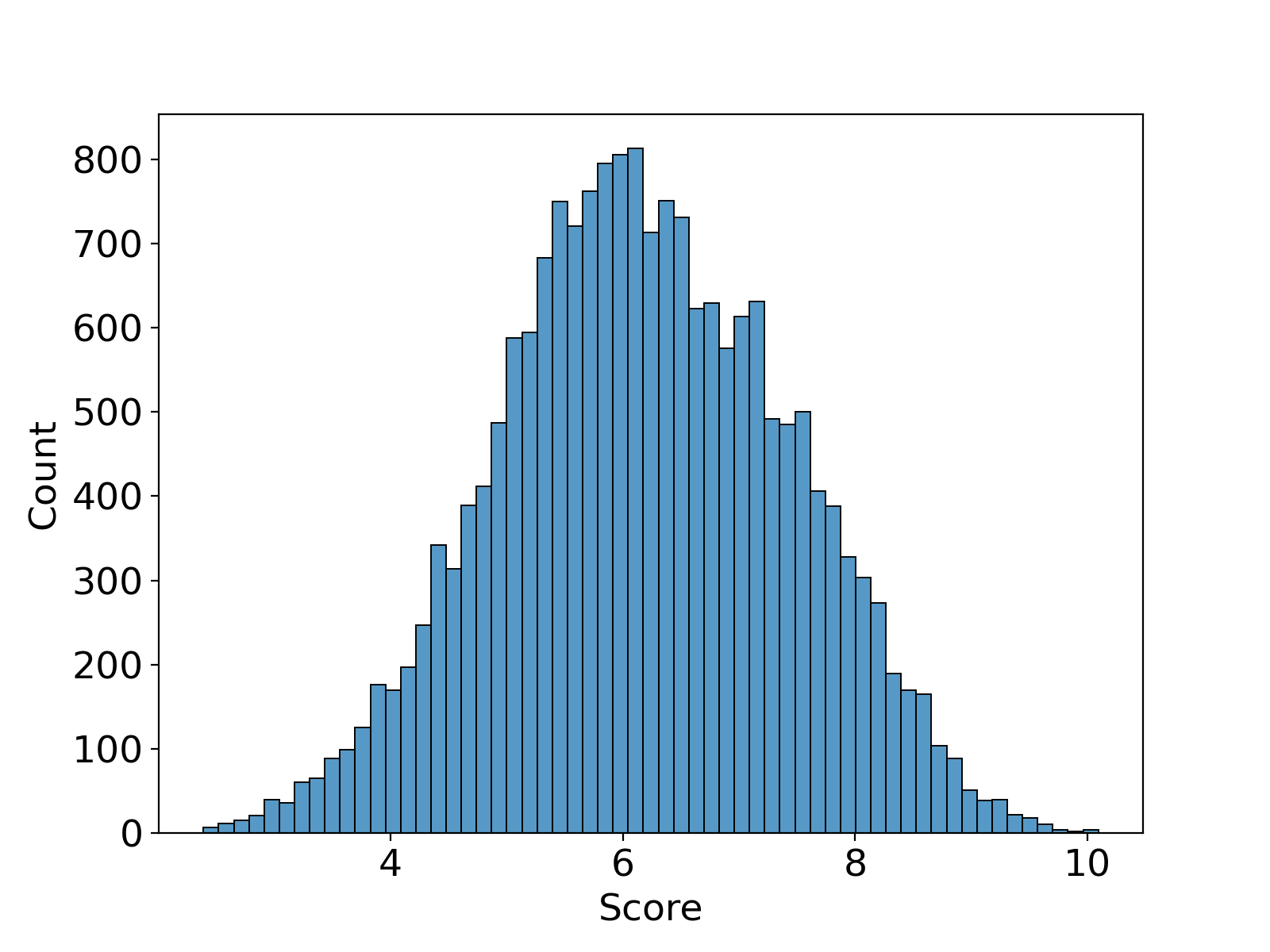

How Do You Compare?

Curious about where your score stands? The chart shows the distribution of results from more than 100,000 real user predictions. The average score is 6.1, with a standard deviation of 1.35. This gives you a broader context for your own result and a fascinating look at the collective data.
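To turn your own score into a rough percentile, the mean and standard deviation above are enough, as long as you assume the scores are approximately normally distributed. That is an assumption: the real distribution may be skewed or clipped at the ends of the scale. This sketch uses Python's standard-library `statistics.NormalDist`; the helper names are hypothetical.

```python
from statistics import NormalDist

# Mean and standard deviation reported for 100,000+ user predictions,
# modeled here as a normal distribution (an approximation).
SCORE_DIST = NormalDist(mu=6.1, sigma=1.35)


def z_score(score: float) -> float:
    """Number of standard deviations a score sits above (+) or below (-) the mean."""
    return (score - SCORE_DIST.mean) / SCORE_DIST.stdev


def approx_percentile(score: float) -> float:
    """Approximate share of users (0-100) scoring below `score`."""
    return 100.0 * SCORE_DIST.cdf(score)
```

Under this approximation, a score of 6.1 sits at the 50th percentile. A score of 7.45, exactly one standard deviation above the mean, lands near the 84th.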

The Platform Powering Your Results

To deliver scores quickly and reliably, we've built our platform on Google Cloud Platform. Our entire system, from the face detection algorithms to the web application itself, is engineered for performance and security. Whether you're visiting our website or using our mobile app, you get a fast, seamless experience. Your privacy is paramount: we process your photos securely and never save or publish them.

Our Commitment to Innovation

At AttractivenessTest.com, we are passionate about the intersection of technology and human perception. We are continuously refining our algorithms and expanding our datasets to make our AI even more accurate and insightful. Our goal is to provide a fun, engaging, and transparent platform that demystifies the science of attractiveness.

How Pretty Am I?

Try our AI-powered attractiveness test: it's quick, accurate, and free!

Try the Attractiveness Test